How to improve reliability in software engineering

Reliability is essential to embedded software. It becomes crucial when you work in a safety-critical space or when devices operate in inaccessible locations.

What is software reliability?

Software reliability describes the probability of a product operating without failures for a specified time in a specified environment.

The conditions embedded systems work in can vary widely. Repairing a failed system is relatively easy when it is accessible, such as a system in a car engine. In a deep-sea submersible or in infrastructure, a failure can be far trickier to handle, so good reliability is critical.

Numerous styles of assessment can help uncover failure points. Still, they don’t always give you a clear way to discover those points as a project progresses, or processes to help fix or prevent them in the first place.

Read on to see how Lean-Agile and Agile principles help improve overall product quality, resulting in fewer failures.

Types of reliability

Reliability failures come in several common types. For each one, ask:

- Does the software behave as specified?

- Has an undefined “rainy day” scenario occurred?

- Is there a usability “rainy day” failure?

- Is there a performance failure? For example, slow response time, poor battery life, or exceeding bandwidth on a comms protocol?

- Is it perceived as reliable from the users’ perspective? The product could be technically excellent, but if it does not work how users expect it to, they will not trust it.

Software reliability metrics

You can measure reliability using metrics such as mean time between failures (MTBF) for repairable systems and mean time to failure (MTTF) for non-repairable systems.

In both cases, you use formulas to set a benchmark in advance. For MTBF, the benchmark is how much time you expect to pass between failures. For MTTF, it is how much time should elapse before a system-ending failure occurs.

Using those pre-set benchmarks, you measure product reliability during testing and after sale.
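As a rough illustration, the sketch below uses the common textbook definitions: MTBF as total operating time divided by the number of failures, and MTTF as the average time to failure across a set of non-repairable units. The function names and the figures in the usage note are hypothetical and are not taken from a real product.

```python
from typing import Sequence


def mtbf_hours(total_operating_hours: float, failure_count: int) -> float:
    """Mean time between failures for a repairable system."""
    if failure_count <= 0:
        raise ValueError("MTBF needs at least one observed failure")
    return total_operating_hours / failure_count


def mttf_hours(hours_to_failure: Sequence[float]) -> float:
    """Mean time to failure across a set of non-repairable units."""
    if not hours_to_failure:
        raise ValueError("MTTF needs at least one failed unit")
    return sum(hours_to_failure) / len(hours_to_failure)


def meets_benchmark(measured_hours: float, benchmark_hours: float) -> bool:
    """Compare a measured value against the pre-set benchmark."""
    return measured_hours >= benchmark_hours
```

For example, a repairable device that logs 10,000 operating hours with 4 failures has an MTBF of 2,500 hours, which would meet a hypothetical benchmark of 2,000 hours.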

A grey area

Sometimes, it is unclear if an issue is a bug or an unspecified behaviour. Bugs can fall into the following categories:

- Implementation error: The implementation deviates from the specified behaviour.

- Adding the wrong feature: A misunderstanding caused an incorrect thing to be implemented. Agile practices like TDD and BDD can mitigate this.

- Incomplete feature: Part of a feature is missing, either by accident or because it is being added iteratively.

- Change request: The product works as specified but not as desired. It is not technically a bug, and the requirements need updating.

An example of a confusing reliability issue: the specifications say a device must stay level when used, but in user trials, the users intuitively pick it up. The device works as designed, but there is a risk that it will not function correctly when users handle it in an unexpected way.

What are the risks of poor reliability?

The risks depend on the application. For most products, there will be risks associated with damage to reputation and brand. There will be repair and maintenance costs. And there will be costs from the loss of functionality.

Specific devices may have other risks.

For instance, a safety-critical product may no longer function safely. For medical devices, there could be harm to the patient or operator if the device fails. In a vehicle, airbags that fail could injure the driver or a passenger, whether during normal vehicle use or in an accident.

There may be additional repair challenges for devices operating in a tricky environment, such as a sterile lab, arctic tundra, a desert, underwater, or space. You will need to plan how to do an update or repair if the device itself is inaccessible.

(This talk below, from Bluefruit colleague Matthew Dodkins, examines much of what’s been discussed here.)

Key factors that improve reliability

Requirements

Unclear or unhelpful requirements can cause reliability issues. They might not meet the users’ needs, or developers might misinterpret them. To prevent this, do requirement reviews and analysis before making changes.

Using Behaviour-Driven Development (BDD) helps clarify the meaning of the requirements before implementation. At the initial stage, when specifying behaviour, product and development teams discuss what the product should do with examples and ask questions. Doing this helps clarify the requirements and share understanding.

Users can test the software produced at the end of each Agile sprint. Early testing ensures that teams are building the “right thing.” Often, users identify reliability issues during this testing.

Whatever method you use to record requirements, it’s essential that they use a common language that development teams, testing teams, and stakeholders all feel comfortable with. Put them in a version-controlled document so that people don’t get tripped up if any requirements change during development.

Testing

Testing can increase confidence in the product’s reliability. Examples include performance, regression, and exploratory testing. Testers can catch and raise issues before release.

User testing can identify common issues that have slipped past internal testing. Customers do not always use the product as you think they will. Here, UX techniques can help uncover problems that testers might miss.

Testing alone does not guarantee quality, but it plays a vital role. The quality of the code itself also affects reliability.

Design reviews

Design reviews involve examining proposed design changes to ensure they make sense and do not accidentally affect other areas. Sometimes, just having the conversation can prompt other ideas for better ways to do it.

Code quality practices

Code reviews can help improve quality. Better quality code often has fewer defects and improved reliability. Reviewers ask: Does the code do what it is supposed to? Is it written according to coding standards? Is it easy to maintain? Are performance requirements met? Often, code changes must pass regression tests before they can be included, which helps prevent unintended bugs in other areas.

Using code quality standards improves the readability and habitability of the codebase. It is easier for other developers to understand what the code does and to make changes. If there are confusing or over-complicated areas of code, this can increase the risk of accidentally introducing defects.

Two coding techniques popularised by Agile can also help improve code quality.

Pairing or ensemble working (also called “mobbing” or “mob programming”) on a coding problem is encouraged by Agile. Whilst one person writes the code, the others involved observe and discuss. It can function as a live review, quickly spotting suggested improvements and typos.

Test-Driven Development (TDD) helps developers clarify the intent before implementing the solution. The developer understands the requirements and writes a failing test before adding the code to make the test pass. TDD reduces requirement-related errors that can make the product harder to use. It also encourages only the necessary code to be added to pass the test and implement the feature. With more concise code, there is less risk of adding defects.
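The sketch below shows what one TDD cycle might look like. It assumes an imagined requirement that the device warns when the battery charge is at or below 15%. The threshold, the function name, and the test names are hypothetical and are only there to show the order of work: tests first, then the smallest implementation that passes them.

```python
LOW_BATTERY_THRESHOLD_PERCENT = 15  # hypothetical value from an imagined requirement


# Step 1: written first. These tests fail until is_battery_low exists
# and behaves as the requirement describes.
def test_warns_at_threshold():
    assert is_battery_low(15)


def test_does_not_warn_above_threshold():
    assert not is_battery_low(16)


# Step 2: the smallest implementation that makes both tests pass.
def is_battery_low(charge_percent: int) -> bool:
    return charge_percent <= LOW_BATTERY_THRESHOLD_PERCENT
```

Writing the boundary cases (15 and 16) as tests forces the team to agree on whether the threshold itself counts as "low" before any code exists.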

Using defensive coding techniques can improve reliability by avoiding assumptions and trying to catch any failures early. Examples include:

- Agreements about how the different software modules will interact.

- Fixed versions of third-party software to prevent external updates from causing issues.

- Approaches for handling memory overflows or resource access issues.

Developers can use assertions in the code to check that the product is ready to complete an operation before attempting it.
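A minimal sketch of that kind of readiness check is shown below. The `Sensor` class, its fields, the 3,000 mV limit, and the `require` helper are all hypothetical. The helper raises an exception instead of using Python’s `assert` statement, because `assert` is skipped when the interpreter runs with `-O`.

```python
class NotReadyError(RuntimeError):
    """Raised when a precondition for an operation is not met."""


def require(condition: bool, message: str) -> None:
    # Explicit check rather than the `assert` statement, so the check
    # still runs when Python is started with -O.
    if not condition:
        raise NotReadyError(message)


class Sensor:  # hypothetical module used only for illustration
    def __init__(self) -> None:
        self.calibrated = False
        self.supply_millivolts = 0

    def read(self) -> int:
        # Check the device is ready before attempting the operation.
        require(self.calibrated, "sensor must be calibrated before reading")
        require(self.supply_millivolts >= 3000, "supply voltage too low to read")
        return 42  # placeholder standing in for a real hardware read
```

Failing early with a clear message makes the broken assumption visible at the point where it occurs, rather than as a confusing fault later on.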

Risk assessments

At regular intervals during development, assess for unintended consequences. You could run an assessment at the start of the project and then review it regularly. It could also involve reviewing metrics such as the number of defects, the mean time to failure, or performance results.

Significant design changes might also need risk assessments. Will this change impact any other areas of the code?

Perform product risk assessments to validate what you are making. Are you making something that meets the users’ needs? Is it high quality?

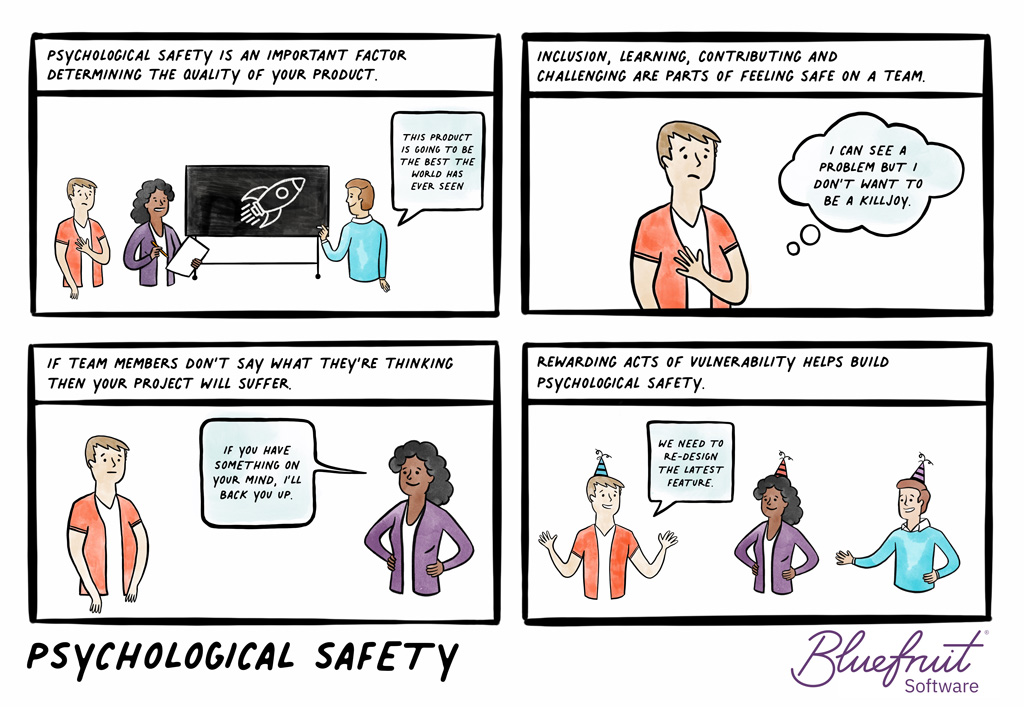

Also, perform project risk assessments that cover areas such as the psychological safety of those in the product teams and the effectiveness of communication.

Fault Recovery

Segmentation:

Especially in safety-critical embedded projects, you will want to isolate any failing modules so that the rest of the device can operate safely.
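One way to picture this isolation is a main cycle that contains a fault in one module and keeps running the others. The sketch below is a simplified illustration rather than a pattern for certified safety-critical code. The `run_cycle` function and the module names in the usage note are hypothetical.

```python
from typing import Callable, Dict, Set


def run_cycle(modules: Dict[str, Callable[[], None]], failed: Set[str]) -> None:
    """Run one step of every healthy module, isolating any that fail."""
    for name, step in modules.items():
        if name in failed:
            continue  # already isolated; skipped until recovery is attempted
        try:
            step()
        except Exception:
            # Contain the fault: mark the module as failed and carry on,
            # so the remaining modules still get to run this cycle.
            failed.add(name)
```

For example, if a hypothetical `display` module raises an error, it is added to `failed` and skipped on later cycles, while a `pump` module in the same dictionary keeps running.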

Use defensive coding as much as possible to reduce assumptions. During development, add recovery features and watchdogs for embedded software projects. In the main loop, “kick” the watchdog regularly, so that a hung thread stops the kicks and the watchdog can trigger a recovery, typically a reset. If needed, you can use more sophisticated watchdog strategies.
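The watchdog idea can be sketched in software as follows. This is a stand-in for a hardware watchdog timer, written only to show the kick-and-timeout logic. The `Watchdog` class and its method names are hypothetical, and on a real device an expired watchdog would usually reset the microcontroller rather than return a flag.

```python
import time
from typing import Callable


class Watchdog:
    """Software stand-in for a hardware watchdog timer (illustrative only)."""

    def __init__(self, timeout_s: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._timeout_s = timeout_s
        self._clock = clock  # injectable so the logic can be tested without waiting
        self._last_kick = clock()

    def kick(self) -> None:
        """Called from the main loop to show it is still making progress."""
        self._last_kick = self._clock()

    def expired(self) -> bool:
        """True when no kick has arrived within the timeout."""
        return self._clock() - self._last_kick > self._timeout_s
```

The main loop calls `kick()` once per iteration. If the loop hangs, the kicks stop, `expired()` becomes true after the timeout, and the recovery action can run.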

Plan how you will update the device so that fixing any issues is as easy as possible.

Failure Mode Analysis:

You can use Failure Mode and Effects Analysis (FMEA) to identify the possible failure points of a system and the effects of those failures. However, FMEA can give you a false sense of security. You think you have considered all the risks, but you might still be missing some, because FMEA relies on the collective experience and knowledge of you and your team. If a failure lies outside that experience, there’s a good chance it will be missed and remain unresolved at release.

Basically, FMEA can’t help you uncover unknown unknowns. (The things you didn’t know you didn’t know at the start of a project.)

And if you rely on FMEA alone for risk analysis in medical devices, you’ll fall short of ISO 14971. The standard requires you to consider risks that aren’t just failure modes.

Compliance and Assurance

Compliance procedures reassure stakeholders that the product’s reliability and quality are good. Compliance alone does not guarantee reliability, but it will help. Following guidance such as AAMI TIR45, which integrates compliance and development activities, can improve quality.

When creating processes, ensure you also consider how to get the best communication within a team and support psychological safety.

Psychological Safety

Teams need to feel empowered to give feedback when they find problems. If there are fixed deadlines for MVPs (Minimum Viable Products), or if every feature is a must-have, there can be pressure not to delay the release, and people will be reluctant to report problems.

“People affect the reliability of the product.”

Agile adds the benefit of empowering the team to make those decisions, which is vital for quality and reliability.

Example of reliability: battery lifetimes in devices

Electronic devices often need to rely on battery lifetimes being adequate. Sometimes, it’s impossible to charge up or replace the battery when it runs out of charge.

Early in product development, before the complexity gets too high, you might not get accurate predictions from battery testing.

As more functionality gets added, the power usage increases and the battery will drain faster. You can combat this by evaluating the battery level every sprint to monitor the usage and predict battery lifetime. Your team can include battery metrics in the sprint report to help keep awareness of these changes.
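A simple way to track this is to estimate lifetime as battery capacity divided by average current draw and to record the result each sprint. The sketch below makes that assumption and ignores real-world effects such as self-discharge, temperature, and capacity derating. The function names and all figures in the usage note are hypothetical.

```python
from typing import Dict


def estimated_lifetime_hours(capacity_mah: float, average_current_ma: float) -> float:
    """Rough battery lifetime: capacity (mAh) divided by average draw (mA)."""
    if average_current_ma <= 0:
        raise ValueError("average current must be positive")
    return capacity_mah / average_current_ma


def lifetime_trend(capacity_mah: float,
                   current_by_sprint: Dict[str, float]) -> Dict[str, float]:
    """Estimated lifetime per sprint, ready to include in a sprint report."""
    return {
        sprint: estimated_lifetime_hours(capacity_mah, current_ma)
        for sprint, current_ma in current_by_sprint.items()
    }
```

With a 2,000 mAh battery and an average draw that rises from 10 mA to 12.5 mA to 16 mA over three sprints, the estimate falls from 200 hours to 160 hours to 125 hours, which makes the cost of each added feature visible early.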

Honing reliability

High-quality testing approaches, coding practices, and empowerment all play a part in creating successful products. Reliable software is a result of building better quality products.

The steps you need to take for your device will depend on the potential harm a failure could cause.

Written by Jane Orme.
