Edge AI development for embedded systems

AI today doesn’t need the cloud: welcome to edge AI

Embedded devices are getting smarter. Thanks to advances in AI and machine learning, product teams can now bring intelligence directly to the edge, without depending on the cloud. From predictive maintenance to real-time decision-making, these new capabilities are transforming what products can do for their users.

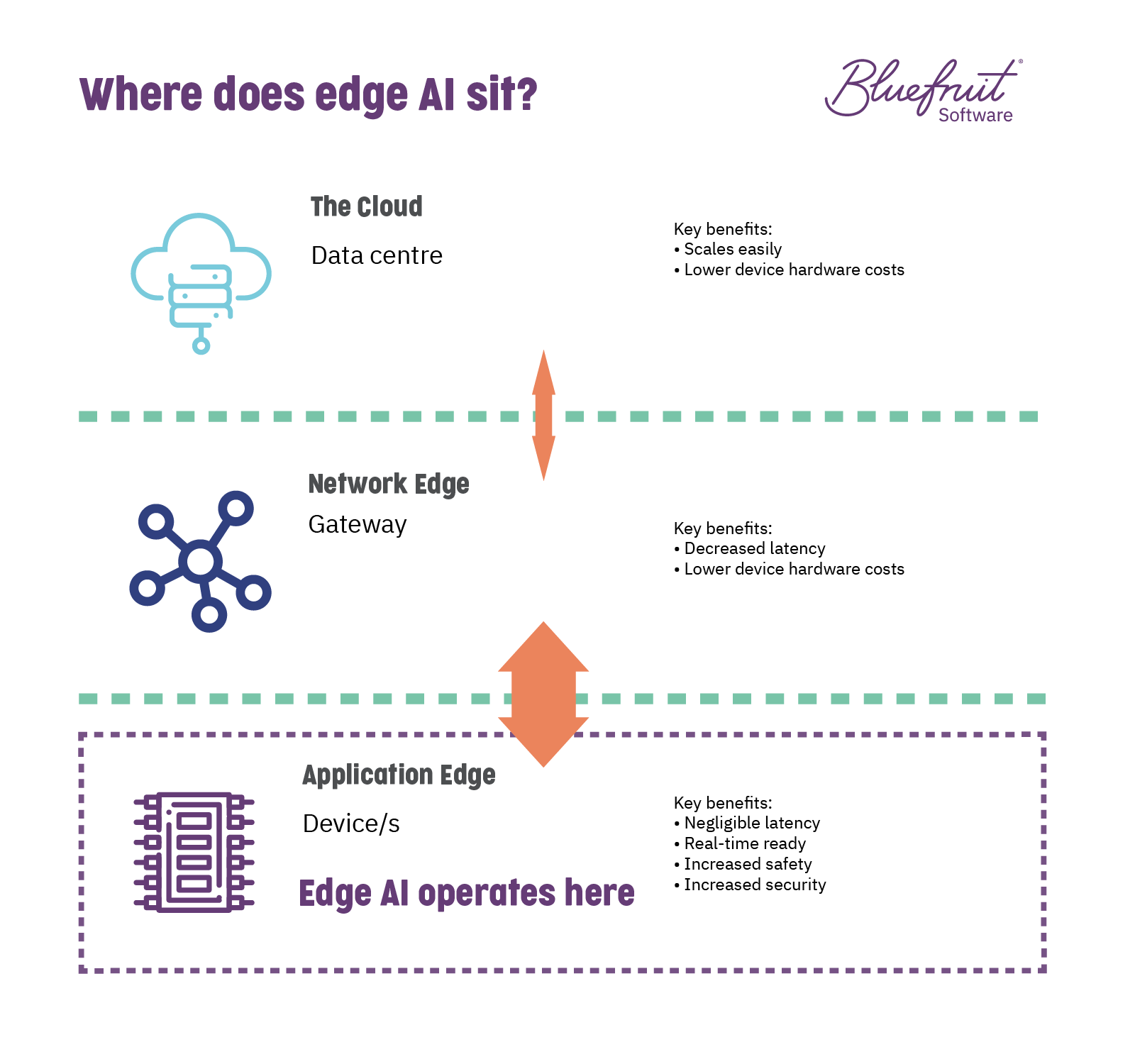

Edge AI sits directly within the device as part of system software. Because it doesn’t need a constant network connection to operate, it is more reliable, secure, and cost-effective.

Microcontroller architectures are evolving to support edge AI on embedded systems and to make the most of the limited computing resources available there. ARM’s Cortex-M55 core and Ethos-U55 AI accelerator are pushing AI forward in resource-constrained environments. Chip manufacturers such as STMicroelectronics, Microchip and NXP are directly enabling machine learning-based AI on their microcontrollers.

With hardware support for AI growing, along with tools for those working in edge AI, product teams can start exploring the benefits of edge AI: namely, how specific AI configurations can operate in real time without the need for a constant internet connection.

Skilfully applied, edge AI can add value to products that need to go further than competitor offerings. That value is realised when AI is implemented by a team that understands both machine learning and embedded software requirements.

Intelligent systems that reduce waste, balance risk, and enhance quality

As digital transformation continues across organisations worldwide, many are considering or reconsidering where AI can improve overall efficiency and reduce risk and costs.

Bringing machine learning to products offers an advantage to businesses looking to develop new products or evolve existing product lines that stand out in the marketplace. By implementing edge AI, it’s possible today to create embedded systems that offer:

- Intelligent automation.

- Non-intrusive/sensorless diagnostics.

- Pre-emptive guidance.

Delivering features like these helps end-users to meet the demands of doing business more effectively today and tomorrow.

Why use edge AI in an embedded system? What can it do for my product?

Adding edge AI to an embedded device can be a great fit in a lot of different scenarios.

What value can AI combined with embedded software engineering provide?

AI has the potential to:

- Enable new forms of human-to-machine interface.

- Improve maintenance and facilitate remote monitoring through sensors and virtual sensors.

- Provide intelligent and automated operational and fault reports.

- Decrease human error in safety-critical situations or extreme environments.

- Automate dangerous or laborious processes.

- Intelligently automate ordering of new parts, through tracking device condition and efficacy.

- Augment existing human operator actions to improve accuracy and safety.

- Help support 24-hour manufacturing processes by monitoring for faults or system failure.

- Provide an additional level of quality monitoring and reporting for safety-critical products.

And depending on how you apply machine learning, your product can benefit from this functionality, whether it’s diagnostic or pre-emptive, in a non-intrusive way.
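As a rough illustration of the fault-monitoring idea in the list above, the sketch below flags readings that deviate sharply from recent history. It is a simple statistical baseline rather than a trained model, and the names (`FaultMonitor`, `window`, `threshold`) and sample readings are assumptions made for this example. Python is used for readability; on a microcontroller the same arithmetic would typically be written in C.

```python
from collections import deque
from math import sqrt


class FaultMonitor:
    """Flags readings that sit far outside the recent rolling window."""

    def __init__(self, window: int = 20, threshold: float = 3.0) -> None:
        self.samples = deque(maxlen=window)  # fixed memory footprint
        self.threshold = threshold           # allowed deviation, in standard deviations

    def update(self, value: float) -> bool:
        """Return True if the new reading looks like a fault."""
        is_fault = False
        if len(self.samples) == self.samples.maxlen:
            mean = sum(self.samples) / len(self.samples)
            variance = sum((s - mean) ** 2 for s in self.samples) / len(self.samples)
            # Guard against a zero spread on perfectly flat signals.
            spread = max(sqrt(variance), 1e-6)
            is_fault = abs(value - mean) > self.threshold * spread
        if not is_fault:
            # Only normal readings update the baseline, so one spike
            # does not mask the next one.
            self.samples.append(value)
        return is_fault


# Hypothetical vibration readings with one obvious spike.
monitor = FaultMonitor(window=5, threshold=3.0)
readings = [1.0, 1.1, 0.9, 1.0, 1.05, 1.02, 5.0, 0.98]
flags = [monitor.update(r) for r in readings]
```

No reading is flagged until the window has filled, so the first five readings only establish the baseline. A production system would tune the window and threshold against real data, or replace the statistics with a trained model.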

(Read more about how edge AI is a perfect fit for embedded systems and devices.)

Is it challenging to bring edge AI to embedded devices?

Greenfield projects will always be ideal candidates for adding AI, but that doesn’t rule out existing products.

Depending on the existing hardware and software, it’s possible to bring in edge AI through software alone, often without adding sensors, by using AI to create virtual sensors that infer a quantity from signals the device already measures.

Using software to add edge AI also keeps interruption to production to a minimum and doesn’t increase costs beyond software development, testing and maintenance.
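To make the virtual sensor idea more concrete, here is a minimal sketch in which a quantity with no dedicated sensor is estimated from a signal the device already measures. It assumes a hypothetical motor whose temperature tracks its current, and a one-feature least-squares line stands in for a trained model. The names (`VirtualSensor`, `fit_virtual_sensor`) and calibration values are illustrative only, not taken from any real project.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class VirtualSensor:
    """Estimates an unmeasured quantity from a signal the device already has."""

    slope: float
    offset: float

    def estimate(self, measured: float) -> float:
        # Cheap enough to run on a small microcontroller: one multiply, one add.
        return self.slope * measured + self.offset


def fit_virtual_sensor(inputs: Sequence[float], targets: Sequence[float]) -> VirtualSensor:
    """Fit a least-squares line offline, for example on a development rig."""
    if len(inputs) != len(targets) or len(inputs) < 2:
        raise ValueError("need at least two paired samples")
    n = len(inputs)
    mean_x = sum(inputs) / n
    mean_y = sum(targets) / n
    var_x = sum((x - mean_x) ** 2 for x in inputs)
    if var_x == 0:
        raise ValueError("inputs must not all be identical")
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in zip(inputs, targets))
    slope = cov_xy / var_x
    return VirtualSensor(slope=slope, offset=mean_y - slope * mean_x)


# Hypothetical calibration data: motor current (A) logged alongside a
# temporary reference thermometer (deg C) that will not ship in the product.
currents = [1.0, 2.0, 3.0, 4.0]
temperatures = [30.0, 40.0, 50.0, 60.0]

sensor = fit_virtual_sensor(currents, temperatures)
estimated = sensor.estimate(2.5)  # temperature estimate with no thermometer fitted
```

The fitting step happens once, during development, while only `estimate` needs to run on the device. A real virtual sensor would likely combine several inputs and use a richer model, but the division of work is the same.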

Want to see edge AI in action? Check out this video of our AeroSpace Cornwall funded R&D project “Audio Classification Equipment (ACE)”:

Why Bluefruit Software for AI-enhanced products?

Over the past 20 years, Bluefruit Software has been developing embedded software for a range of embedded systems across medical, aerospace, and industrial sectors. Several of our recent projects have focused on using AI in embedded systems, including remote engine monitoring using AI and our AeroSpace Cornwall funded R&D audio classification project (ACE) that examines the use of edge AI in embedded devices.

Our AI experience spans both cloud and edge and includes:

- Machine learning and neural nets.

- Sensorless diagnostics, through the use of virtual sensors.

- Image and audio recognition.

- Compliance in AI, including living documentation.

- AI model training.

- Enhancing AI data sets for training.

- Remote systems monitoring.

Our clients rely on us for compliance-ready, high-quality, innovative embedded software development, and release schedules that can be depended on.

Knowhow that works with your needs

Do you have a software project that needs experienced embedded software engineers, testers and quality experts? Then why not get in touch with Bluefruit Software today?

How do we make embedded software development work? Through our processes, experience and skills.

❝ Paul and his team have worked with us on a number of projects and bring an extra dimension to software product development in terms of their commitment and technical expertise. ❞

❝ A 'can do' approach shines through on each project, with customer satisfaction very much at the top of the list. ❞

❝ With years of working together, we regard Bluefruit as a valued extension of our internal product development team. ❞

❝ Bluefruit provide a professional, innovative and technical team in a very friendly environment. They display a culture of continuous improvement in everything they do for us, this and their positive approach to every challenge makes them a great partner to work with. ❞